Feedback from an AI-driven tool improves teaching, Stanford-led research finds

Artificial intelligence is rapidly transforming education, in both worrisome and beneficial ways. On the positive side of the ledger, new research shows how AI can help improve the way instructors engage with their students, by way of a cutting-edge tool that provides feedback on their interactions in class.

A new Stanford-led study, published May 8 in the peer-reviewed journal Educational Evaluation and Policy Analysis, found that an automated feedback tool improved instructors’ use of a practice known as uptake, in which teachers acknowledge, reiterate, and build on students’ contributions. The study also found evidence that the tool improved students’ assignment completion rates and their overall satisfaction with the course.

For instructors looking to improve their practice, the tool offers a low-cost complement to conventional classroom observation – one that doesn’t require an instructional coach or other expert to watch the teacher in action and compile a set of recommendations.

“We know from past research that timely, specific feedback can improve teaching, but it’s just not scalable or feasible for someone to sit in a teacher’s classroom and give feedback every time,” said Dora Demszky, an assistant professor at Stanford Graduate School of Education (GSE) and lead author of the study. “We wanted to see whether an automated tool could support teachers’ professional development in a scalable and cost-effective way, and this is the first study to show that it does.”

Promoting effective teaching practices

Recognizing that existing methods for providing personalized feedback require significant resources, Demszky and colleagues set out to create a low-cost alternative. They leveraged recent advances in natural language processing (NLP) – a branch of AI that helps computers read and interpret human language – to develop a tool that could analyze transcripts of a class session to identify conversational patterns and deliver consistent, automated feedback.

For this study, they focused on identifying teachers’ uptake of student contributions. “Uptake is key to making students feel heard, and as a practice it’s been linked to greater student achievement,” said Demszky. “But it’s also widely considered difficult for teachers to improve.”

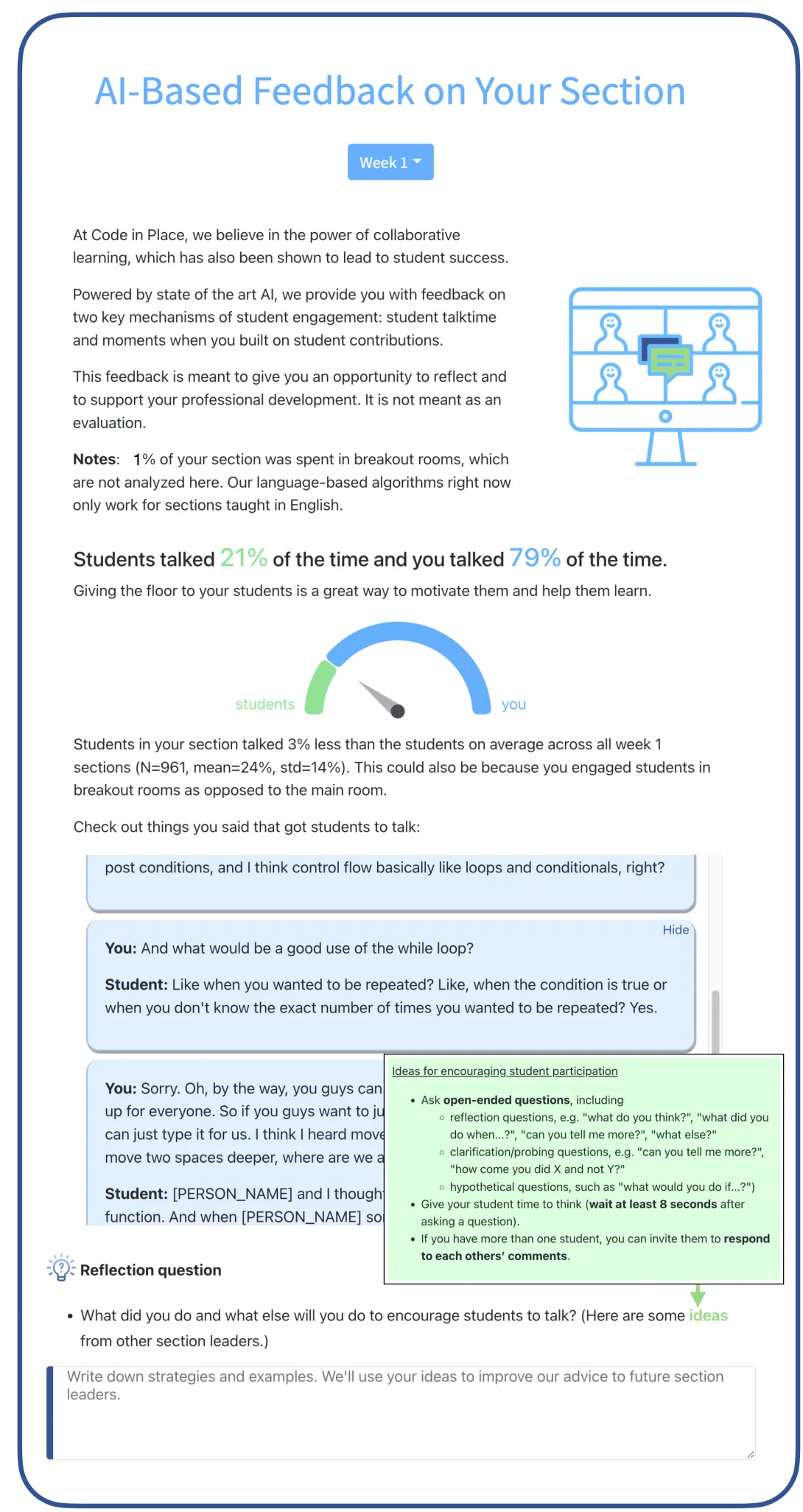

The researchers trained the tool, called M-Powering Teachers (the M stands for machine, as in machine learning), to detect the extent to which a teacher’s response is specific to what a student has said, which would show that the teacher understood and built on the student’s idea. The tool can also provide feedback on teachers’ questioning practices, such as posing questions that elicit a substantial response from students, and on the ratio of teacher to student talk time.
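To make these measures concrete, here is a minimal sketch in Python. It is not the M-Powering Teachers model, which the article describes only as a trained NLP system. The `Turn` type, the speaker labels, and the word-overlap score are all assumptions made for illustration. Word overlap is a crude stand-in for uptake: it treats every word equally and would miss a teacher who builds on a student's idea in different words.

```python
import re
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str  # hypothetical labels: "teacher" or "student"
    text: str


def tokens(text):
    """Lowercase a transcript line and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def talk_share(turns, speaker):
    """Fraction of all spoken words that belong to the given speaker."""
    total = sum(len(tokens(t.text)) for t in turns)
    spoken = sum(len(tokens(t.text)) for t in turns if t.speaker == speaker)
    return spoken / total if total else 0.0


def overlap_score(student_text, teacher_text):
    """Jaccard overlap of word sets: a rough proxy, not a learned uptake measure."""
    s, t = set(tokens(student_text)), set(tokens(teacher_text))
    if not s or not t:
        return 0.0
    return len(s & t) / len(s | t)


def uptake_pairs(turns):
    """Score every teacher turn that directly follows a student turn."""
    return [
        (a, b, overlap_score(a.text, b.text))
        for a, b in zip(turns, turns[1:])
        if a.speaker == "student" and b.speaker == "teacher"
    ]
```

Given a list of `Turn` objects from one class session, `talk_share(turns, "teacher")` approximates the teacher's share of talk time by word count, and `uptake_pairs(turns)` lists each student contribution with the teacher's reply and its overlap score.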

The research team put the tool to work in the Spring 2021 session of Stanford’s Code in Place, a free online course now in its third year. In the five-week program, based on Stanford’s popular introductory computer science course, hundreds of volunteer instructors teach basic programming to learners worldwide, in small sections with a 1:10 teacher-student ratio.

The M-Powering Teachers tool provides feedback with examples of dialogue from the class to illustrate supportive conversational patterns.

Code in Place instructors come from all sorts of backgrounds, from undergrads who’ve recently taken the course themselves to professional computer programmers working in the industry. Enthusiastic as they are to introduce beginners to the world of coding, many instructors approach the opportunity with little or no prior teaching experience.

The volunteer instructors received basic training, clear lesson goals, and session outlines to prepare for their role, and many welcomed the chance to receive automated input on their sessions, said study co-author Chris Piech, an assistant professor of computer science education at Stanford and co-founder of Code in Place.

“We make such a big deal in education about the importance of timely feedback for students, but when do teachers get that kind of feedback?” he said. “Maybe the principal will come in and sit in on your class, which seems terrifying. It’s much more comfortable to engage with feedback that’s not coming from your principal, and you can get it not just after years of practice but from your first day on the job.”

Instructors received their feedback from the tool through an app within a few days after each class, so they could reflect on it before the next session. Presented in a colorful, easy-to-read format, the feedback used positive, nonjudgmental language and included specific examples of dialogue from their class to illustrate supportive conversational patterns.

The researchers found that, on average, instructors who reviewed their feedback subsequently increased their use of uptake and questioning, with the most significant changes taking place in the third week of the course. Student learning and satisfaction with the course also increased among those whose instructors received feedback, compared with the control group. Code in Place doesn’t administer an end-of-course exam, so the researchers used the completion rates of optional assignments and course surveys to measure student learning and satisfaction.

Testing in other settings

Subsequent research by Demszky with one of the study’s coauthors, Jing Liu, PhD ’18, studied the use of the tool among instructors who worked one-on-one with high school students in an online mentoring program called Polygence. The researchers, who will present their findings in July at the 2023 Learning at Scale conference, found that on average the tool improved mentors’ uptake of student contributions by 10%, reduced their talk time by 5%, and improved students’ experience with the program as well as their relative optimism about their academic future.

Demszky is currently studying the tool’s use in in-person K-12 classrooms, and she noted the challenge of producing transcripts as accurate as those she was able to obtain from a virtual setting. “The audio quality from the classroom is not great, and separating voices is not easy,” she said. “Natural language processing can do so much once you have the transcripts – but you need good transcripts.”

She stressed that the tool was not designed for surveillance or evaluation purposes, but to support teachers’ professional development by giving them an opportunity to reflect on their practices. She likened it to a fitness tracker, providing information for its users’ own benefit.

The tool also was not designed to replace human feedback but to complement other professional development resources, she said.

In addition to Dora Demszky, Jing Liu, and Chris Piech, the study’s co-authors are Dan Jurafsky, a professor of linguistics and of computer science at Stanford, and Heather C. Hill, a professor at the Harvard Graduate School of Education.
